Lorenzo Torresani, Martin Szummer, Andrew Fitzgibbon

abstract:

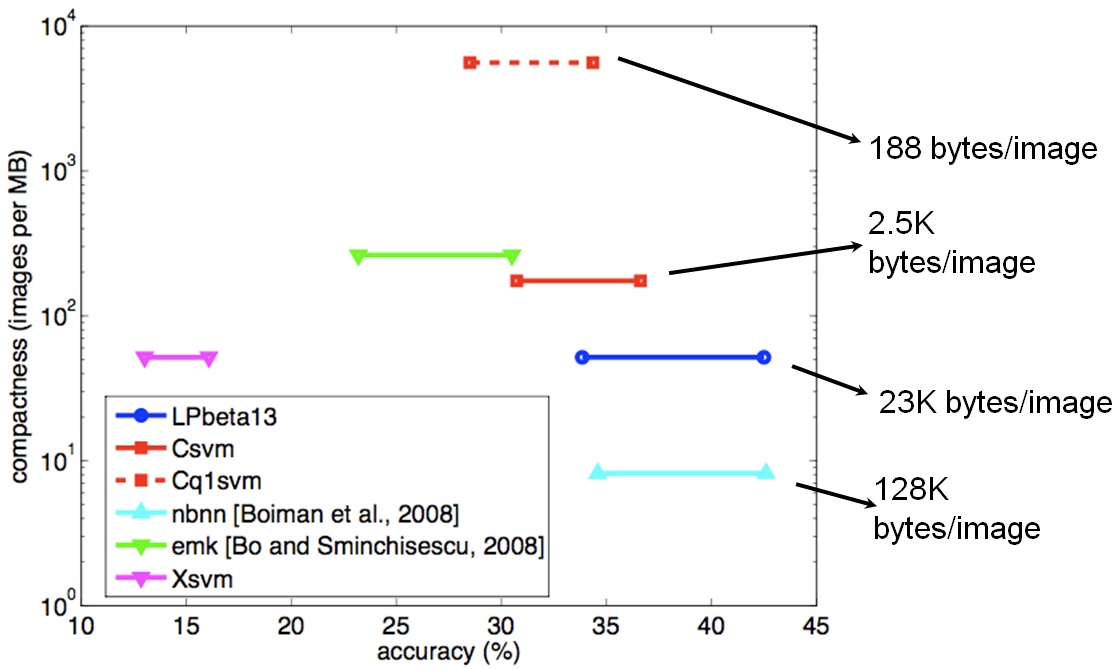

We introduce a new descriptor for images which allows the construction of efficient and compact classifiers with good accuracy on object category recognition. The descriptor is the output of a large number of weakly trained object category classifiers on the image. The trained categories are selected from an ontology of visual concepts, but the intention is not to encode an explicit decomposition of the scene. Rather, we accept that existing object category classifiers often encode not the category per se but ancillary image characteristics; and that these ancillary characteristics can combine to represent visual classes unrelated to the constituent categories’ semantic meanings. The advantage of this descriptor is that it allows object-category queries to be made against image databases using efficient classifiers (efficient at test time) such as linear support vector machines, and allows these queries to be for novel categories. Even when the representation is reduced to 200 bytes per image, classification accuracy on object category recognition is comparable with the state of the art (36% versus 42%), but at orders of magnitude lower computational cost.
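The sketch below shows the idea in plain Python. For illustration only, it assumes each classeme extractor is a linear model `(weights, bias)` applied to a single low-level feature vector. The actual extractors are trained on several low-level features and need not be this simple. The names `classeme_vector`, `query_score` and `rank_database` are hypothetical. The point is the test-time cost: once an image's descriptor is stored, scoring it for a new query category takes a single dot product.

```python
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def dot(a: Vector, b: Vector) -> float:
    """Inner product of two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("length mismatch")
    return sum(x * y for x, y in zip(a, b))


def classeme_vector(features: Vector,
                    extractors: Sequence[Tuple[Vector, float]]) -> List[float]:
    """Descriptor = one score per pre-trained category classifier.

    Assumption: every (hypothetical) extractor is linear, (weights, bias),
    in the image's low-level feature vector.
    """
    return [dot(w, features) + b for (w, b) in extractors]


def query_score(descriptor: Vector, query_w: Vector, query_b: float) -> float:
    """Linear classifier for a (possibly novel) category on the descriptor."""
    return dot(query_w, descriptor) + query_b


def rank_database(descriptors: Sequence[Vector], query_w: Vector,
                  query_b: float) -> List[int]:
    """Indices of database images sorted from highest to lowest score."""
    scores = [query_score(d, query_w, query_b) for d in descriptors]
    return sorted(range(len(descriptors)), key=lambda i: scores[i], reverse=True)
```

Only `rank_database` runs at query time. It touches the stored descriptors and never the low-level features, which is why a linear classifier per query stays cheap.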

paper:

Lorenzo Torresani, Martin Szummer, Andrew Fitzgibbon. Efficient Object Category Recognition Using Classemes. European Conference on Computer Vision, 2010.

results:

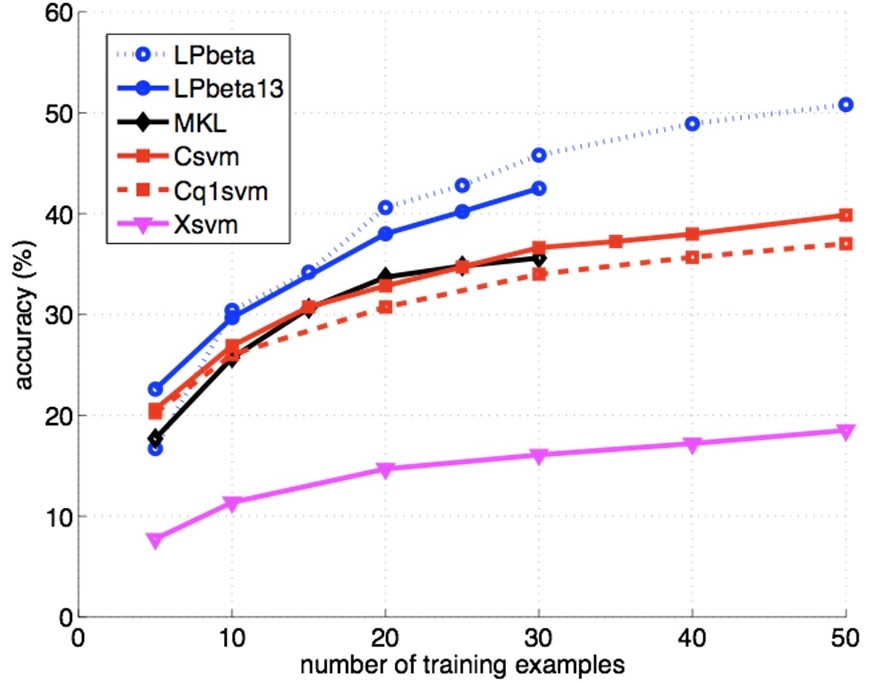

The figure above shows the categorization accuracy of different classifiers on the Caltech256 benchmark. Csvm denotes a linear SVM using continuous classemes, while Cq1svm is a linear SVM using binarized classemes. Note that these simple classifiers achieve accuracy similar to Multiple Kernel Learning (MKL) as implemented in [Gehler and Nowozin, 2009]. Xsvm denotes a linear SVM trained on the same low-level features that were used to create the classemes; its accuracy is over 50% lower than that of the classeme-based linear SVMs. LPbeta is the LP-β kernel combiner of [Gehler and Nowozin, 2009], and LPbeta13 is the same model trained with our low-level features (using 13 kernels instead of 39). These classifiers achieve slightly better accuracy but are over two orders of magnitude more expensive to train and test.
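The binarized variant (Cq1svm) can be sketched as follows. We assume each classeme score is thresholded at zero and the resulting bits are packed eight per byte. The threshold, the bit order and the helper names are our own assumptions, not the released software. A linear SVM can then be applied to the 0/1 vector exactly as to the continuous one.

```python
import math
from typing import List, Sequence


def binarize(descriptor: Sequence[float]) -> List[int]:
    """Assumption: a classeme 'fires' (bit = 1) when its score is positive."""
    return [1 if s > 0 else 0 for s in descriptor]


def pack_bits(bits: Sequence[int]) -> bytes:
    """Pack 0/1 values into bytes, most significant bit first.

    The last byte is zero-padded when len(bits) is not a multiple of 8.
    """
    out = bytearray(math.ceil(len(bits) / 8))
    for i, bit in enumerate(bits):
        if bit:
            out[i // 8] |= 0x80 >> (i % 8)
    return bytes(out)


def unpack_bits(packed: bytes, n: int) -> List[int]:
    """Inverse of pack_bits for the first n bits."""
    return [(packed[i // 8] >> (7 - i % 8)) & 1 for i in range(n)]
```

With one bit per classeme, N classemes occupy ceil(N/8) bytes per image.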

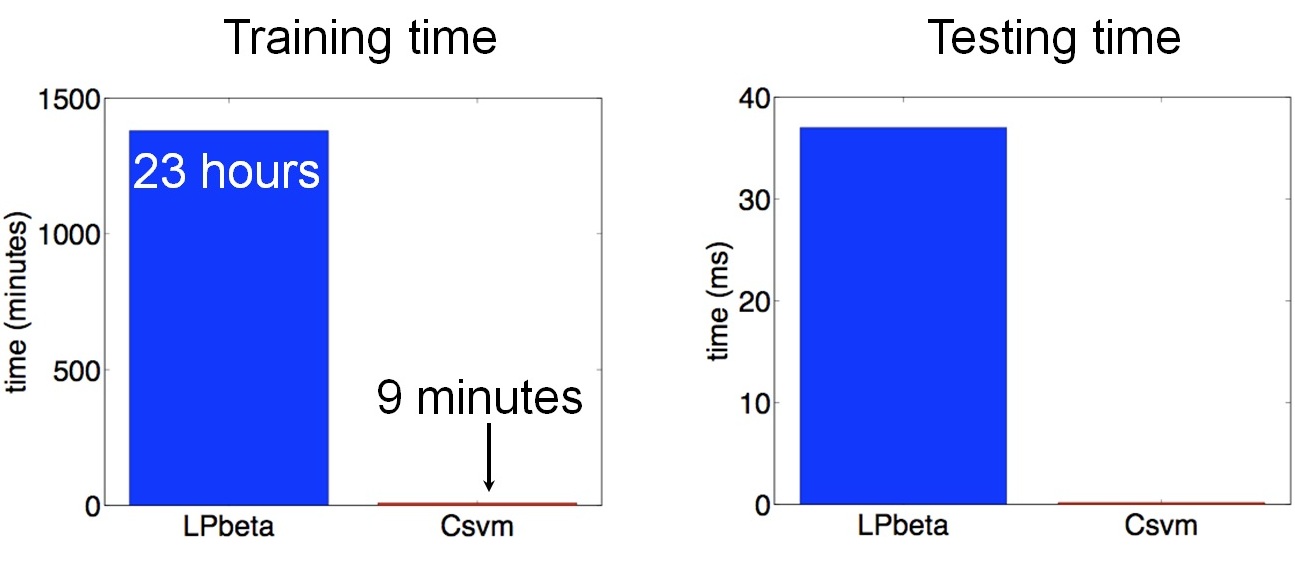

Computational cost comparison between LPbeta and Csvm: training and testing time for multiclass classifiers learned using 5 training examples for each of the Caltech256 classes.

Accuracy versus compactness of representation on Caltech256. On both axes, higher is better (note the logarithmic y-axis). The lines link performance at 15 and 30 training examples. The compact size of our descriptor allows even large databases to be stored in memory rather than on disk.
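Here is a back-of-the-envelope check of the memory claim. It uses the 200 bytes per image quoted in the abstract. For comparison, it also sizes a continuous descriptor of 2659 classemes stored as 4-byte floats; that float width is our assumption. The function name is hypothetical.

```python
def database_size_mb(num_images: int, bytes_per_image: int) -> float:
    """Total descriptor storage in megabytes (1 MB = 10**6 bytes)."""
    return num_images * bytes_per_image / 1e6


# Compact descriptor (200 bytes/image, from the abstract).
compact = database_size_mb(1_000_000, 200)
# Assumed float32 storage of 2659 continuous classemes.
continuous = database_size_mb(1_000_000, 2659 * 4)
```

For a hypothetical database of one million images, this gives 200 MB for the compact code versus about 10.6 GB for the assumed float version.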

software:

Software to extract classemes from images is available here.

classeme features:

The following files contain classeme vectors in Matlab format for different datasets. Note that these features were extracted with a different version of the software than the one linked above; classemes produced by different versions cannot be mixed.

- Caltech256: caltech256_classemes_v1.0.tgz (599 MB)

- INRIA Holidays (the images can be downloaded from the INRIA webpage): holidays_classemes_v1.0.tgz (29.1 MB)
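The feature archives are gzip-compressed tar files, which Python's standard `tarfile` module can unpack. A minimal sketch with a hypothetical helper name follows. The variable names stored inside the Matlab files are not documented here. After extraction it is safest to inspect them rather than assume them, for example with `scipy.io.loadmat`, which returns a dict keyed by variable name. Note that Matlab v7.3 files are HDF5 and are not readable by `loadmat`.

```python
import tarfile
from pathlib import Path
from typing import List


def extract_classemes_archive(archive: str, dest: str) -> List[str]:
    """Unpack a .tgz archive into dest and return the regular-file member names."""
    Path(dest).mkdir(parents=True, exist_ok=True)
    with tarfile.open(archive, "r:gz") as tar:
        names = [m.name for m in tar.getmembers() if m.isfile()]
        # Use the safe 'data' extraction filter when this Python provides it.
        if hasattr(tarfile, "data_filter"):
            tar.extractall(dest, filter="data")
        else:
            tar.extractall(dest)
    return names
```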

classeme training data:

Our classeme extractors are object classifiers learned from images retrieved by Bing Image Search. The list of 2659 classeme keywords and the retrieved image examples are available here:

- classeme keywords

- classeme training images (stored in 99 tar files, 46 GB in total)

highly weighted classemes:

The main objective of this project is the design of a compact image descriptor enabling efficient object class recognition. The goal of our classemes is not to assign semantic annotations to images or classes. Nevertheless, in order to gain insight into our representation, it is instructive (and fun!) to look at the most highly weighted classemes in linear classifiers learned for Caltech256 classes.
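Listing the most highly weighted classemes of a learned linear classifier amounts to sorting its weight vector. The minimal sketch below assumes the weights come in the same order as the classeme keyword list. The function name and the example keywords are hypothetical.

```python
from typing import List, Sequence, Tuple


def top_classemes(weights: Sequence[float], keywords: Sequence[str],
                  k: int = 5) -> List[Tuple[str, float]]:
    """Return the k (keyword, weight) pairs with the largest weights."""
    if len(weights) != len(keywords):
        raise ValueError("one weight per classeme keyword is required")
    order = sorted(range(len(weights)), key=lambda i: weights[i], reverse=True)
    return [(keywords[i], weights[i]) for i in order[:k]]
```

For example, `top_classemes([0.1, 2.5, -0.3, 1.7], ["kw_a", "kw_b", "kw_c", "kw_d"], k=2)` returns `[("kw_b", 2.5), ("kw_d", 1.7)]`.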